It is standard practice in the field of machine learning to break a dataset into two separate sets. These sets are set for preparation and set for testing. Holding the training and testing data apart is preferable.

Why split?

If we don’t divide the dataset into training and testing sets, then we end up testing our model on the same data and training it. We tend to get good precision when we test on the same data we trained our model on.

This does not, however, mean that the model will work as well on unseen results. This is referred to in the field of machine learning as overfitting.

Overfitting is the case when the training dataset is depicted a little too accurately by your model. This means that it blends too closely into your model.

Overfitting while training a model is an undesirable phenomenon. So there’s underfitting.

Underfitting is when the model is not even capable of representing in the training dataset the data points.

How to split a dataset

Import the dataset

Let’s start by importing a dataset into our Python notebook.

In this tutorial, we are going to use the titanic dataset as the sample dataset. You can import the titanic dataset from the seaborn library in Python.

import seaborn as sns

titanic = sns.load_dataset('titanic')

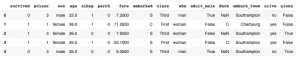

titanic.head()

Input and output vectors

We need to prepare input and output vectors from the dataset before we continue to break the dataset into training and testing sets.

Let’s consider the column ‘Survived’ as an output. This implies that this model will be equipped to predict whether or not a surviving individual will survive.

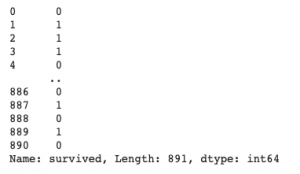

y = titanic.survived print(y)

We also need to remove ‘survived‘ column from the dataset to get the input vector.

x=titanic.drop('survived',axis=1)

x.head()Split ratio

The split ratio is what portion of the knowledge is going to the training set and what portion of it is going to the research set. Almost always the training set is greater than the research set.

70:30 is the most common split ratio used by data scientists.

A 70:30 split ratio means that 70% of the knowledge will go to the training set and 30% of the dataset will go to the testing set.

We will use train test split from the Sklearn library to split the results.

Train-test-split randomly distributes your information according to the ratio given in training and testing.

We will use 70:30 as the split ratio.

We need to import train-test-split from Sklearn first.

from sklearn.model_selection import train_test_split

To perform the split use :

x_train,x_test,y_train,y_test=train_test_split(x,y,test_size=0.3)

Full code

import seaborn as sns

from sklearn.model_selection import train_test_split

#import dataset

titanic = sns.load_dataset('titanic')

#output vector

y = titanic.survived

#input vector

x=titanic.drop('survived',axis=1)

#split

x_train,x_test,y_train,y_test=train_test_split(x,y,test_size=0.3)

#verify

print("shape of original dataset :", titanic.shape)

print("shape of input - training set", x_train.shape)

print("shape of output - training set", y_train.shape)

print("shape of input - testing set", x_test.shape)

print("shape of output - testing set", y_test.shape)Ending Note

If you liked reading this article and want to read more, continue to follow the site! We have a lot of interesting articles upcoming in the near future. To stay updated on all the articles, don’t forget to join us along on Twitter and sign up for some interesting reads!